Lessons from building a bit-parallel SIMD simulator for a RISC-V CPU

The Setup

We’re building a bit-parallel simulation engine for Verilator, the open-source Verilog simulator. The idea: instead of evaluating a circuit signal-by-signal, group thousands of independent 1-bit operations (AND, OR, XOR, NOT, MUX) into SIMD batches. One 64-bit word operation computes 64 independent logic gates simultaneously.

Our test target is the CVA6, a 6-stage RISC-V CPU with ~570K signal bits. The bit-parallel engine decomposes the entire combinational logic into ~514K bit-level operations organized in ~250 batches, executed via a wavefront scheduler.

After a long series of optimizations, we reached 1.45× speedup over Verilator’s standard eval path (22.7 kHz vs 15.7 kHz simulation clock). Then we discovered something interesting in the profiling data.

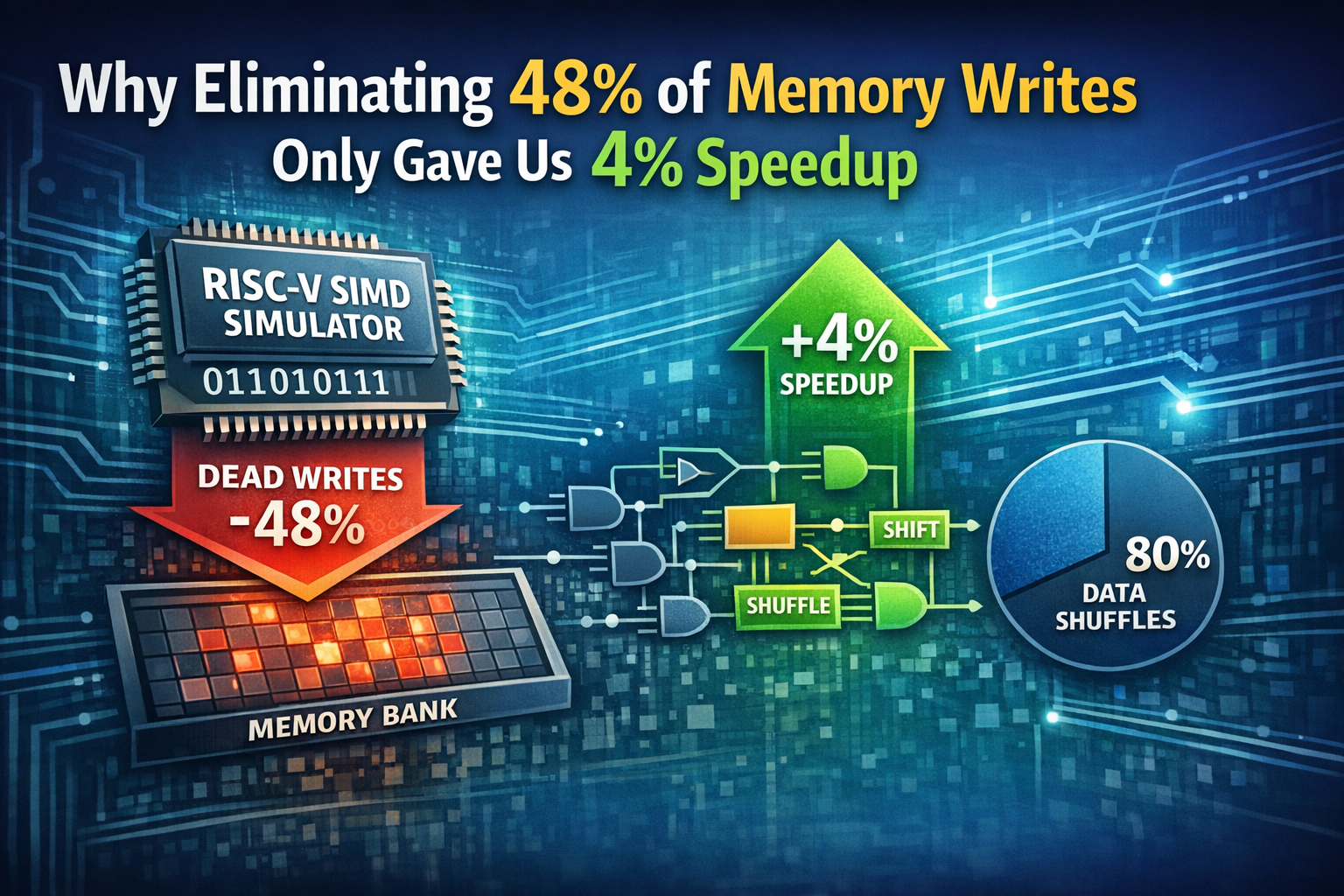

The Discovery: 48.8% Dead Writes

Our engine uses a flat uint64_t bank (~9,500 words, 76 KB) where all signal values live. Each batch reads its inputs from the bank, computes, and writes results back. An earlier optimization — expression fusion — inlines single-consumer intermediate results directly into the consumer’s compute expression, bypassing the bank entirely.

But here’s the thing: the fused producer batches were still writing their results to the bank, even though nobody reads them. A quick analysis revealed:

- 16,779 total bank write lines in the generated code

- 8,181 of them write to words that are never read (48.8%)

- These dead writes come from producer batches whose outputs are consumed exclusively via fusion

Half of all memory writes were provably useless.

The Fix

Simple: before emitting a bank write, check if the output word has exactly one consumer AND that consumer fuses the expression inline. If so, skip the write.

if (isDeadWrite(batch.outSegBase + dw)) continue; // consumer fuses inlineThe Disappointment

Result: 1.49× speedup. Up from 1.45×. Only +4%.

We eliminated 48.8% of all bank writes and got 4% speedup. Where did the other 44.8% go?

Why Writes Are (Almost) Free

The answer is store buffers. Modern x86 CPUs have deep store buffers (typically 56–72 entries on recent Intel/AMD cores) that absorb writes without stalling the pipeline. A mov [mem], reg instruction retires in 1 cycle regardless of whether the cache line is hot, cold, or even evicted — the store buffer holds the write until the cache hierarchy can absorb it.

Our bank is 76 KB, which fits in L1 cache (typically 32–48 KB per core for data, but the working set is smaller because we access it sequentially). Even when writes miss L1, the store buffer absorbs them. The CPU only stalls when the store buffer is full, which requires ~60 consecutive writes without any intervening loads that could drain it.

In our generated code, writes and reads are interleaved (each batch reads inputs, then writes output), so the store buffer never fills up. The dead writes were hiding in the buffer, costing essentially zero pipeline cycles.

What Actually Costs Time

Our profiling revealed the real bottleneck: shuffles. 80% of the generated code is shuffle operations (pext/pdep, shift+mask) that rearrange bits from scattered source positions into contiguous batch input words. Only 20% is actual compute (AND, OR, XOR on 64-bit words).

The shuffle cost comes from the data layout: each batch combines operations from different signals across the entire CPU. Input bits are scattered across the bank. Even after our fixpoint layout optimization (which reduced shuffle cost by 18%), 97% of gathers still require bit-level permutation.

This is the fundamental tension: batching operations for SIMD parallelism requires bringing together bits from different signals, but those signals live at different bank positions.

Performance Timeline

| Optimization | Speedup | Note |

|---|---|---|

| Initial correct implementation | 1.28× | Wavefront scheduler, zero-copy reads |

| + Expression fusion | 1.32× | Inline single-consumer intermediates |

| + Fixpoint input-sort | 1.45× | Global shuffle cost minimization |

| + Dead write elimination | 1.49× | Skip writes for fused outputs |

The biggest single gain came from the fixpoint sort (+13%), not from reducing writes or compute.

Lessons Learned

- Writes are cheap, reads are expensive. On modern CPUs with deep store buffers, writes to hot cache lines are essentially free. Focus optimization on reducing reads, especially scattered reads that cause cache misses.

- The shuffle is the bottleneck, not the compute. In any data-parallel system where the parallelism comes from combining independent operations, the cost of gathering inputs dominates the cost of computing on them. This is well-known in GPU programming but equally applies to CPU SIMD.

- Local optimizations can hurt globally. Our per-batch input sort improved local shuffle cost but degraded downstream batches (whose input homes changed). Only the fixpoint iteration — which accepts changes only when the global cost decreases — found the right balance.

- Measure before optimizing. We spent time on predicated execution (dark silicon pruning) which showed neutral results with random test data. The shuffle analysis immediately pointed to the real bottleneck.

What’s Next

- Store buffer exploitation: Since writes are free, we could speculatively write intermediate results to the bank even when they might be dead — trading bank space for simpler code generation.

- Layout graph coloring: Place signals that are frequently read together into adjacent bank words, reducing cache line crossings.

- AVX-512: The current 64-bit word size limits us to 64 parallel operations. AVX-512 would give 512, but requires careful alignment and the shuffle problem becomes even more critical.

- Multithreading: Independent batch clusters could run on separate cores, with the bank in shared memory. The wavefront structure naturally provides a dependency graph for task scheduling.

The Code

The bit-parallel engine is implemented as a Verilator --bit-parallel flag across ~2,500 lines of C++ in the Verilator compiler (scheduler, layout optimizer, code emitter) plus the generated code (~127K lines for CVA6). All standard Verilator regression tests pass.

The full implementation evolved over 22 commits in a single development session, starting from a broken 512-instance model and ending with a working 1.49× speedup on a real RISC-V CPU design.

Comments are closed